Artificial Intelligence (AI) is a broad field of computer science focused on creating systems or machines that exhibit capabilities typically associated with human intelligence.

The field comprises multiple sub-fields, such as machine learning, deep learning, natural language processing, computer vision, robotics and automation, among others. An AI system typically analyses vast amounts of data to extract patterns and draw conclusions, so that it can recognise the same patterns in new data and draw similar conclusions.

Despite genuine breakthroughs in the field, the term “AI” has become a buzzword that is often overused or misused. Marketing and product announcements tend to attach the AI label to software that may rely on basic algorithms or simple rule-based systems, leading end-users to develop unrealistic expectations of the system’s capabilities.

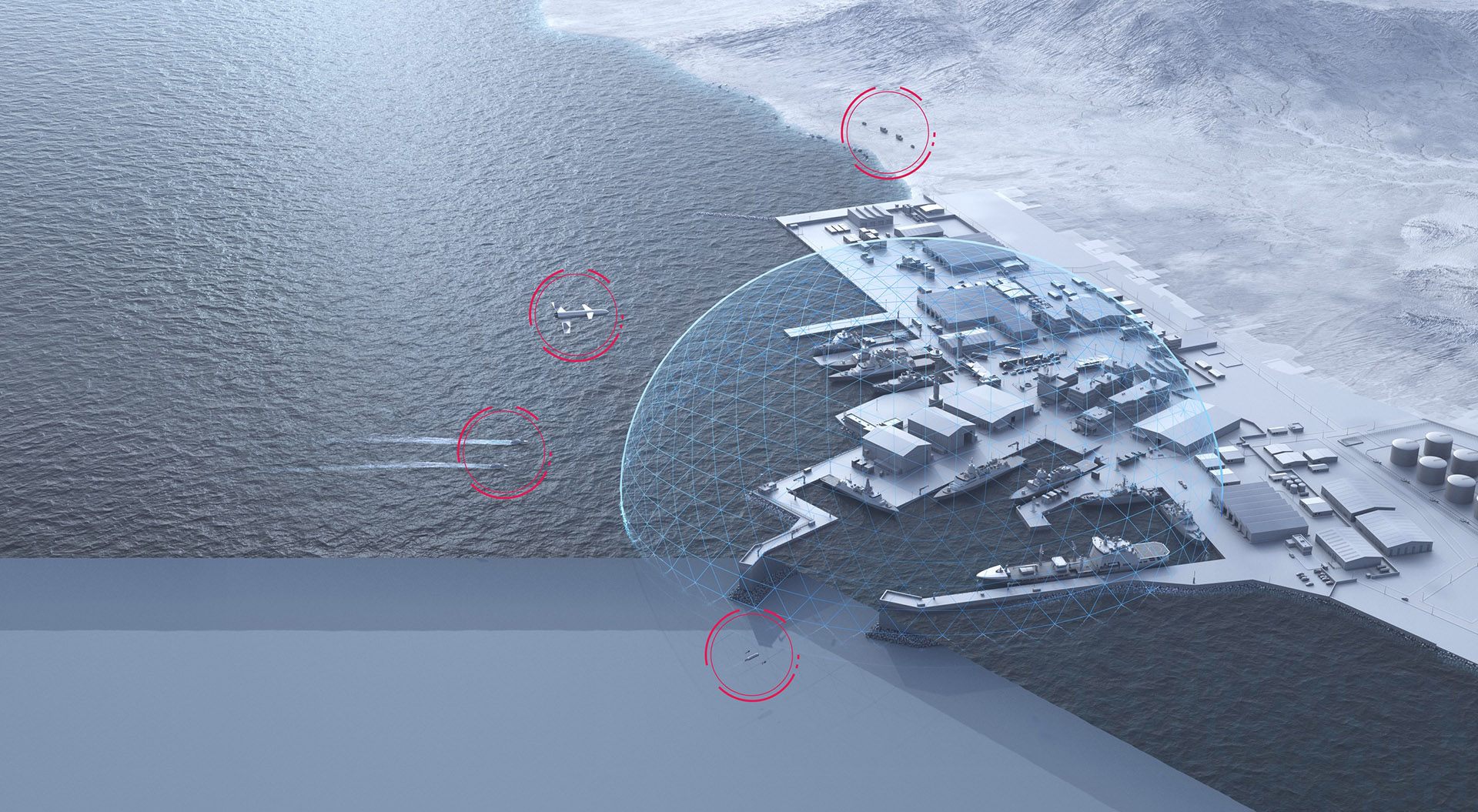

Some of those announcements border on promising magic solutions, letting end-users assume that AI outputs are always accurate and unbiased. In reality, AI models learn from a training dataset that may not be representative of every possible use case, so they may generalise poorly to situations they have not seen. Overconfidence in AI outputs can result in blind trust in the system's recommendations, which can lead to critical errors, especially in high-stakes sectors like defense.
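To make the point about training data concrete, here is a minimal sketch of a toy classifier. Everything in it is an illustrative assumption rather than a real system: the labels `A` and `B`, the two-number feature vectors and the helper names `train_centroids` and `predict` are all hypothetical. The model simply remembers the average position of each label in its training data and assigns new inputs to the nearest average.

```python
from math import dist

# Hypothetical training data: (feature vector, label) pairs.
TRAINING = [
    ((1.0, 1.0), "A"),
    ((1.0, 2.0), "A"),
    ((8.0, 8.0), "B"),
    ((9.0, 8.0), "B"),
]


def train_centroids(samples):
    """Average the feature vectors of each label into one centroid per label."""
    sums, counts = {}, {}
    for features, label in samples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in acc] for label, acc in sums.items()}


def predict(centroids, features):
    """Return the nearest label and the distance to its centroid."""
    label = min(centroids, key=lambda name: dist(centroids[name], features))
    return label, dist(centroids[label], features)


centroids = train_centroids(TRAINING)

# An input that resembles the training data: small distance to its centroid.
print(predict(centroids, (1.2, 1.4)))

# An input unlike anything seen in training still receives a label.
# Only the large distance hints that the answer should not be trusted.
print(predict(centroids, (100.0, -50.0)))
```

The toy model never declines to answer. Unless the distance (or some other measure of uncertainty) is surfaced and checked, the second prediction looks exactly as authoritative as the first.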

The belief that AI will replace human jobs or eliminate the need for human judgement is misleading. AI systems are inherently prone to bias, so they require a human in the loop and should be seen as an augmentation tool more than an automation tool. These systems can rapidly analyse large amounts of data, highlight possible conclusions and improve situational awareness, but they cannot replace skilled personnel. Relying on an AI without proper human verification and accountability also has ethical and legal implications, particularly in the defense field.
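One way to picture a human in the loop is as a routing rule placed between the model and any action taken on its output. The sketch below is only an illustration under stated assumptions: the `Recommendation` type, its `confidence` field, the `REVIEW_THRESHOLD` value and the `route` function are hypothetical names, not part of any particular product or library.

```python
from dataclasses import dataclass


@dataclass
class Recommendation:
    summary: str
    confidence: float  # hypothetical self-reported score between 0 and 1


# Illustrative threshold; a real value would need to be set and validated
# by the people accountable for the decision.
REVIEW_THRESHOLD = 0.9


def route(rec: Recommendation, high_stakes: bool) -> str:
    """Decide whether a recommendation needs review by a skilled operator."""
    # High-stakes decisions always go to a human, whatever the model reports.
    if high_stakes:
        return "human_review"
    # Low-confidence outputs are also escalated.
    if rec.confidence < REVIEW_THRESHOLD:
        return "human_review"
    return "auto_accept"


print(route(Recommendation("Routine log anomaly", 0.97), high_stakes=False))
print(route(Recommendation("Routine log anomaly", 0.55), high_stakes=False))
print(route(Recommendation("Possible threat detected", 0.99), high_stakes=True))
```

The design choice worth noting is that the high-stakes check comes first: a model's reported confidence is itself an output that can be wrong, so it should not be able to bypass human verification where the consequences are serious.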